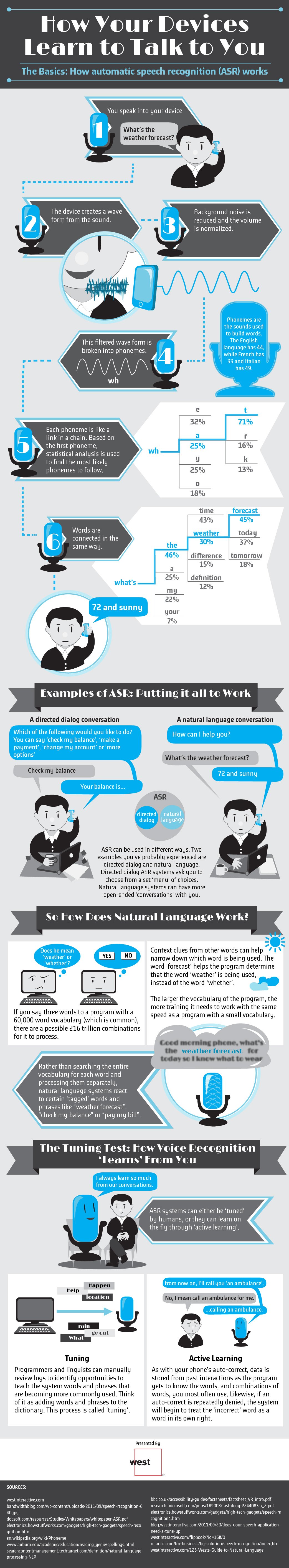

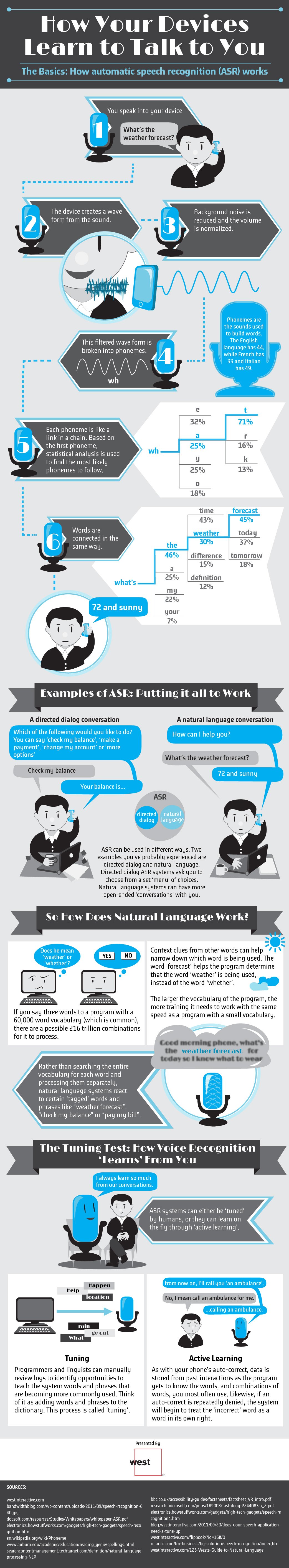

Have you ever given your smartphone a voice command or called a customer service number that required you to speak in order to navigate the pre-recorded menu options? If so, you’ve used automatic speech recognition (ASR). This technology is becoming increasingly common, and some devices can now learn from users’ speech patterns and commonly used words in order to simulate a conversation.

So how does ASR actually work? First, a person speaks into the microphone on their device. The speech is captured as a waveform, which the device is programmed to break down into identifiable phonemes (the sounds that are the building blocks of words). Beyond identifying individual phonemes, the device can also work out words by determining which phonemes are statistically most likely to follow a given phoneme, based on data from previous interactions with the user.
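To make the "statistically most likely next phoneme" idea concrete, here is a minimal sketch in Python. It assumes a simple bigram count over past utterances, which is only one way such statistics could be kept. The function names, the sample history, and the ARPAbet-style phoneme labels are all illustrative assumptions, not the internals of any particular ASR product.

```python
from collections import Counter, defaultdict

def train_bigrams(sequences):
    """Count how often each phoneme is followed by each other phoneme."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def most_likely_next(counts, phoneme):
    """Return the phoneme seen most often after `phoneme`, or None if unseen."""
    followers = counts.get(phoneme)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical past utterances from one user, written as phoneme lists.
history = [
    ["W", "EH", "DH", "ER"],  # "weather"
    ["W", "EH", "L"],         # "well"
    ["W", "EH", "DH", "ER"],  # "weather" again
]

model = train_bigrams(history)
print(most_likely_next(model, "EH"))
```

Because this user has said "weather" more often than "well", the sketch favors "DH" after "EH". A real recognizer would combine statistics like these with the acoustic evidence from the waveform rather than rely on counts alone.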

Some ASR systems are designed to use directed dialog, while others use natural language processing. A directed dialog is what you experience when you call a business or a customer service line and listen to a recording that asks you to speak a command from a list of set choices. You’ll be able to navigate the menu as long as you speak one of the available options, but if you stray from the script, the system won’t be able to interpret what you’re saying. Natural language processing, on the other hand, uses past data and statistical inferences to recognize and respond to speech. It works best with fairly simple “conversations” that rely primarily on yes or no answers or that use certain tagged phrases the device is programmed to recognize, such as “weather forecast.”
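The difference between the two approaches can be sketched in a few lines of Python operating on text that has already been transcribed. This is a simplified illustration: the menu options, tagged phrases, and intent names are hypothetical, and the second function only spots tagged phrases by whole-word matching. It leaves out the statistical inference a real natural language system would add.

```python
import re

# Hypothetical fixed menu for a directed dialog.
MENU_OPTIONS = {"billing", "support", "sales"}

# Hypothetical tagged phrases mapped to made-up intent names.
TAGGED_PHRASES = {
    "weather forecast": "get_weather",
    "yes": "confirm",
    "no": "decline",
}

def directed_dialog(utterance):
    """Accept the utterance only if it is exactly one of the menu options."""
    choice = utterance.strip().lower()
    return choice if choice in MENU_OPTIONS else None

def spot_tagged_phrase(utterance):
    """Return the intent of the first tagged phrase found as whole words."""
    words = re.findall(r"[a-z']+", utterance.lower())
    for phrase, intent in TAGGED_PHRASES.items():
        target = phrase.split()
        n = len(target)
        # Slide a window of n words across the utterance.
        if any(words[i:i + n] == target for i in range(len(words) - n + 1)):
            return intent
    return None

print(directed_dialog("Billing"))
print(directed_dialog("I have a question about my bill"))
print(spot_tagged_phrase("What's the weather forecast for tomorrow?"))
```

The directed dialog rejects anything off-script, while the phrase spotter tolerates extra words around a phrase it knows. Matching on whole words rather than raw substrings keeps "I don't know" from being mistaken for "no".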

You can learn even more about how ASR works, including how voice recognition can “learn” from you over time, by checking out the infographic below.